Avoiding Frankenstein and Fiefdoms: Using Data as a Strategic Asset in Capital Construction Programs

Vice President at Enstoa

Just because you have data doesn’t mean you’re using it as a strategic asset.

That’s an important distinction. Some organizations we work with believe that because they have enterprise applications, codified processes, and heaps of collected data, they’re already exercising all of their enterprise assets to achieve their strategic goals. And yet they aren’t.

Let’s explore why.

Lack of integrated applications requires analysts to reconstruct what’s already happened

To take advantage of opportunities and address challenges quickly, organizations need ready access to the data that will help them formulate and execute a plan. What we observe, however, is that many organizations operate like a bank without an ATM, one that hands over your money a month after you request it.

For many organizations, getting data takes a long time, whether measured in elapsed time, in the man-hours required, or, in the worst case, both. This puts at risk the organization’s ability to respond to opportunities and challenges, and to monitor its response, effectively and efficiently.

It takes a long time to track down the relevant data and corral it because the posse of analysts tasked with doing so must lasso data from all over. We often see these analysts rounding up information from a herd of disconnected applications, random spreadsheets, and various databases dotting the enterprise landscape. A client recently referred to a collection of such systems and data sources tucked away in nooks and crannies as a “cornucopia of crapola.”

Being able to use all of your data quickly makes it a strategic asset for your business decisions. If key information is spread across the organization in silos, users aren’t able to access it rapidly or, worse, may not even be aware it exists.

Among organizations with large capital construction programs, few things are as sacred as the monthly report. How the monthly report gets created is a good litmus test for determining if the organization has the foundations that enable it to use its data as a strategic asset.

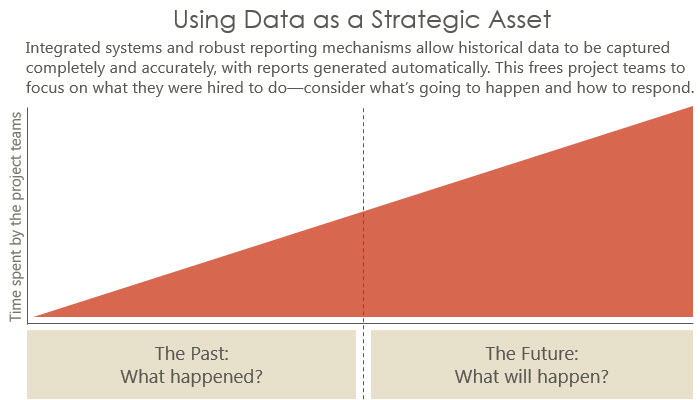

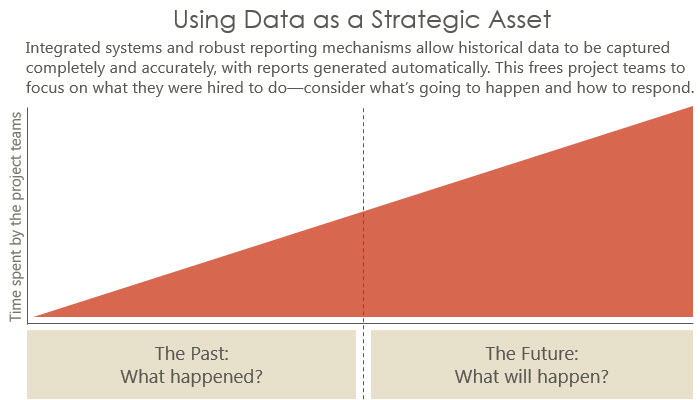

Organizations creating these monthly reports in a largely manual fashion from a cornucopia of data sources suffer from the Frankenstein Effect: analysts spend most of their time finding the right pieces of data across several severed sources, splicing them together, and assembling a complete body of information to explain what’s already happened. The result is that analysts are tied up in low-value work, and by the time they deliver their reports, the reports are already stale, built on weeks-old data. A lamentably small portion of their time goes to analyzing the data, predicting what’s going to happen, and deciding how to respond.

The opposite should be true. Analysts should spend most of their time doing what they were hired to do: analyzing the information and considering what’s going to happen in the future. Knowing what’s already happened with your capital construction program should be available at the click of a button, not the result of hundreds of man-hours of manual calculation.

And to be clear, it’s not science fiction. We’ve seen many organizations mature to the point where such automation is business as usual. To achieve this, however, organizations need integrated processes supported by integrated systems and reporting mechanisms that automatically process data, calculate metrics, and generate monthly reports.
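To make the idea of automated reporting a little more concrete, here is a minimal Python sketch, assuming two hypothetical sources (a cost system and a scheduling tool) that share a common project ID. The record types, field names, and the earned-value-style cost variance metric (earned value = budget × percent complete; cost variance = earned value − actual cost) are illustrative assumptions, not a description of any particular product or of how any specific organization builds its reports.

```python
from dataclasses import dataclass


# Hypothetical record types: in practice these would be fed from the
# organization's systems of record, ideally keyed on a shared project ID.
@dataclass
class CostRecord:
    project_id: str
    budget: float
    actual_cost: float


@dataclass
class ScheduleRecord:
    project_id: str
    percent_complete: float  # fraction from 0.0 to 1.0


def build_monthly_report(costs: list[CostRecord],
                         schedules: list[ScheduleRecord]) -> list[dict]:
    """Join cost and schedule data on project_id and compute simple metrics."""
    schedule_by_id = {s.project_id: s for s in schedules}
    rows = []
    for c in costs:
        s = schedule_by_id.get(c.project_id)
        if s is None:
            # Flag the gap instead of silently dropping the project.
            rows.append({"project_id": c.project_id,
                         "status": "missing schedule data"})
            continue
        # Earned-value-style metric (an illustrative assumption).
        earned_value = c.budget * s.percent_complete
        rows.append({
            "project_id": c.project_id,
            "budget": c.budget,
            "actual_cost": c.actual_cost,
            "percent_complete": s.percent_complete,
            "cost_variance": earned_value - c.actual_cost,
            "status": "ok",
        })
    return rows


if __name__ == "__main__":
    costs = [CostRecord("P-001", 1_000_000, 450_000),
             CostRecord("P-002", 500_000, 100_000)]
    schedules = [ScheduleRecord("P-001", 0.5)]
    for row in build_monthly_report(costs, schedules):
        print(row)
```

The point of the sketch is the shape of the process rather than the specific metric: once the sources are integrated on a common key, the join and the calculations run the same way every month, and missing data surfaces as a flagged row rather than as an analyst’s afternoon of detective work.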

Data fiefdoms arise when applications and processes don’t support project teams

Have you ever heard something like this: “See Bob about forecasting. He developed an Access database, and he’s the only one who really knows how it works” or “If you need space information, see Jane the Space Queen.” These are signs that data fiefdoms exist across the organization, with just a few people lording over who has access to the data and what it means.

Such fiefdoms complicate analysts’ efforts to gather the data they need to analyze. Common reasons we see for these fiefdoms appearing include:

- The organization lacks integrated applications, resulting in teams developing rogue mechanisms to track the data they need.

- The applications or processes provided by the organization don’t meet the needs of project teams. In response, teams develop ad hoc tools and databases so they can do their work.

What are you experiencing in your organization?

Are you living in a reality where the “Frankenstein Effect” is in full force and you need a SWAT team to pull together a true view of your capital project or program?

Have you been successful in doing away with data fiefdoms and democratizing data?

Steven Mattson Hayhurst serves as the Vice President of Data Services at Enstoa, a technology consulting firm helping organizations with large capital programs leverage technology, data, and processes to improve project planning and delivery.

View Steven’s activity on Twitter at https://twitter.com/mattsonhayhurst.
